| |

|

| How Video Surveillance AI Is “Trained” |

|

|

|

| Emily |

Published: :2026/1/6 |

|

|

|

|

|

| |

|

| |

|

|

| Video surveillance AI is often described as something that can “automatically recognize what’s happening once the system is installed,” as if deploying the hardware and turning on the algorithm is all it takes for video to turn into actionable intelligence. In real-world projects and long-term operations, however, this understanding is far too simplistic. In practice, video surveillance AI behaves much more like a visual system that must be cultivated over time and continuously recalibrated. It is not something you configure once and expect to work forever; changes in environment, usage patterns, and site conditions constantly put the system to the test. |

| |

Before deployment, system designers must first answer a fundamental question: what, exactly, is the AI expected to “understand”? Some sites only require basic people and vehicle detection to support traffic counting or access control. Others aim to go further, identifying intrusions, loitering, abandoned objects, fights, falls, or unauthorized entry into restricted zones. Each objective implies a very different data requirement and training strategy. Only after these goals are clarified can teams decide whether to rely on manual annotation, weak supervision, self-supervised learning, or synthetic data. Even if a model performs well in laboratory tests, recognition accuracy can gradually drift once real-world conditions change. This is why training and deploying video surveillance AI is widely regarded as a long-term, iterative effort rather than a one-time delivery. The industry’s growing focus on MLOps (Machine Learning Operations) reflects this reality.

Step One: Where the Data Comes From

1. Real-world site data: the most effective and the most expensive

For surveillance AI, the most valuable data is always footage that most closely resembles the actual deployment environment: the same camera height and focal length, similar backlighting conditions, ground materials, rain and fog, nighttime illumination, uniform styles, and people or vehicle density. The reason is straightforward. Vision models are highly sensitive to data distribution. A model trained in a warehouse may see its performance drop sharply when deployed in a train station or hospital. This is a textbook example of domain shift and data drift in practice.

2. Public datasets: building a baseline

In many projects, public datasets are used first to establish basic visual capabilities, which are then refined with site-specific data. Large-scale datasets such as COCO (Common Objects in Context) provide widely used annotations for tasks like object detection and segmentation and have long served as a benchmark in computer vision. You can think of this step as teaching the model to “generally understand the world” before teaching it to understand your specific site.

3. Simulation data and digital twins

When certain scenarios are rare or difficult to capture—such as specific fence-climbing angles, uncommon safety incidents, or specialized PPE recognition—synthetic data becomes an increasingly common supplement. Simulation tools or digital twins can generate images along with automatic annotations such as bounding boxes, masks, depth, and pose information. These synthetic datasets help expand training coverage and improve model stability in edge cases. NVIDIA, for example, has publicly outlined workflows that move from simulation to training and fine-tuning, and then to deployment, positioning synthetic data as a core pillar of modern visual AI development.

Step Two: Annotation

Most surveillance applications still begin with supervised learning, where images are paired with correct labels. The form of annotation largely determines what the model can learn:

Bounding boxes allow the model to detect people and vehicles, count objects, and identify zone intrusions.

Multi-frame boxes or consistent IDs enable tracking, path analysis, and dwell-time estimation.

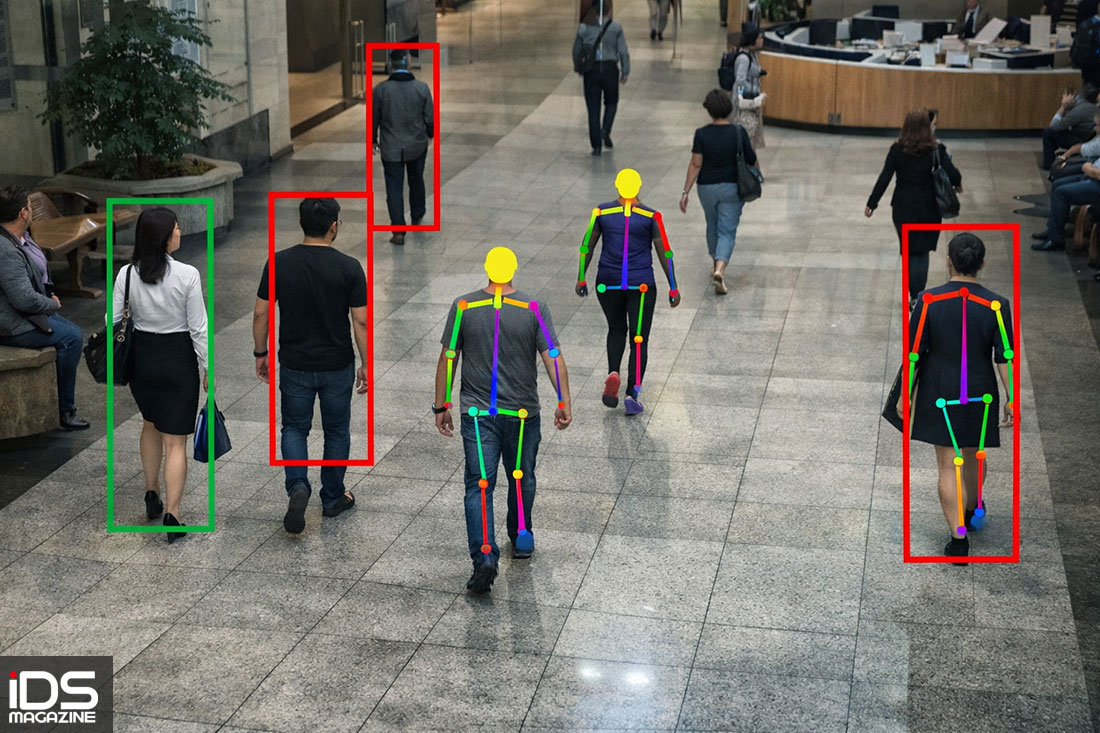

Skeleton or pose annotations make fall detection, fights, and hazardous actions feasible.

Clear event start and end times are essential for behavior recognition and event detection.

Annotation quality often matters more than algorithm choice. The COCO dataset, for example, uses staged annotation and validation processes to maintain consistency at scale. The same principle applies in surveillance deployments. If the definition of “line crossing” is vague, restricted zones change frequently, or annotators interpret “loitering” differently, the model will ultimately learn an ambiguous average—and false alarms are almost inevitable once the system goes live.

At the most basic level, an AI surveillance system begins with object detection, separating people, cars, motorcycles, or packages from the background and giving structure to the video stream. Tracking mechanisms then maintain identity consistency across frames, allowing the system to treat a moving target as the same entity over time, even across multiple cameras. Only on top of this foundation can higher-level analysis attempt to understand behaviors and events such as line crossing, restricted-area entry, prolonged presence, crowding, running, or collapse. These judgments are no longer about “what is visible,” but about the combined interpretation of time, location, and movement patterns. Among these tasks, anomaly detection is widely considered the most challenging.

Abnormal behavior is difficult to define exhaustively, labeled data is scarce and inconsistent, and real-world incidents occur infrequently, making this category one of the hardest problems in both academic research and industrial deployment.

Step Three: Training Strategies

When it comes to training, nearly every surveillance AI challenge ultimately traces back to data. Experience consistently shows that the most valuable data comes from footage captured by the actual cameras in the deployment environment, not from idealized demo videos. Camera height, lens selection, backlighting, ground surfaces, weather conditions, nighttime lighting, crowd density, vehicle flow, and even clothing styles all shape how a model interprets a scene. Vision models are extremely sensitive to data distribution, so it is not unusual for performance to degrade significantly when a model trained in a factory or warehouse is moved to a transportation hub or medical facility. This is the real-world manifestation of domain shift and data drift.

To establish baseline capabilities, industry practice commonly combines public datasets with early-stage training, then fine-tunes the model using site-specific data. Datasets like COCO have long served as benchmarks for object detection and segmentation, not because they directly match surveillance use cases, but because they provide diverse, consistent visual samples that help models learn general visual representations. When real-world data is insufficient or certain scenarios are exceptionally rare, synthetic data and digital twins play a crucial complementary role. By generating images and precise annotations in simulated environments, teams can accelerate learning and validation for specific scenarios. This approach has become an integral part of modern visual AI pipelines.

From Full Re-annotation to Weak Supervision and Active Learning

Weakly supervised learning: when only coarse labels are feasible

For anomaly detection, frame-by-frame or second-by-second annotation is often prohibitively expensive. As a result, researchers have proposed weakly supervised approaches in which annotators only label whether a video segment contains an anomaly, without specifying the exact timing. Techniques such as Multiple Instance Learning (MIL) are then used to infer the likely location and severity of anomalies. This has become one of the most representative directions in surveillance anomaly detection research.

Active learning: spending annotation budgets where it matters most

Annotation is always a cost sink. Instead of labeling everything, many teams train an initial model and let it identify samples where it is most uncertain or most prone to error—such as backlit scenes, heavy occlusion, rain at night, or reflective surfaces. These samples are then prioritized for annotation and used to train the next model iteration, forming a closed feedback loop. In practice, this is how active learning is often implemented in visual AI projects, achieving faster performance gains with fewer labeled samples.

In the early stages of most surveillance projects, supervised learning remains the most common approach. Images and ground-truth labels are provided together, and the annotation format directly constrains what the model can learn. With only bounding boxes, the model is typically limited to basic detection and zone intrusion. Adding consistent identity tracking enables trajectory and dwell-time analysis. Incorporating pose or skeleton data makes fall detection and hazardous action recognition feasible. Clearly labeled event boundaries allow more complete behavioral understanding. Repeatedly, practical experience shows that annotation quality outweighs algorithmic sophistication. Just as COCO relies on multi-stage annotation and validation to maintain consistency at scale, surveillance systems suffer when definitions are unclear, boundaries shift, or annotators disagree—resulting in persistent false alarms after deployment.

As scenarios grow more complex, training strategies are evolving. In anomaly detection, fine-grained labeling is not only costly but difficult to sustain, pushing both researchers and practitioners toward weak supervision. By labeling only whether a video contains an anomaly and using techniques like Multiple Instance Learning to estimate its timing and intensity, systems can continue improving under limited annotation resources. In parallel, active learning is increasingly integrated into production workflows, allowing scarce annotation budgets to be focused on samples that most affect performance and accelerating iteration cycles.

Validation and Metrics

At the validation stage, surveillance AI faces challenges very different from those in academic research. Metrics like mAP are useful references, but in real deployments, false alarms are what most directly determine user acceptance. In electronic perimeter systems, for example, swaying tree shadows, rain reflections, animals, falling leaves, or patrol staff being misclassified as intrusions can quickly erode trust. As a result, practical validation must cover day–night transitions, weather changes, camera angle differences, crowd and traffic density, compression settings, and even specific operational time windows to reflect real usage conditions.

Data Drift and Long-Term Operations After Deployment

Once a system goes live, the real challenges begin to surface. Lighting upgrades, camera aging, focus drift, plant growth, changes in movement patterns, and even seasonal shifts in clothing can gradually push real-world footage away from the original training data, leading to data drift. Mature deployment strategies therefore treat surveillance AI as a system requiring long-term maintenance. Continuous performance monitoring, periodic retraining, version updates, and revalidation are essential to sustaining reliability over years of operation. This is the core philosophy behind MLOps and a key determinant of whether a system remains usable over the long term.

Training and deploying surveillance AI is less like installing a finished product and more like educating a student over many years. The process spans data collection, cleaning, annotation, training, validation, deployment, monitoring, and re-collection. Synthetic data and weak supervision help reduce annotation costs and data scarcity, while active learning and MLOps enable surveillance AI to evolve from proof-of-concept into durable, maintainable infrastructure.

Notes

MLOps: Short for Machine Learning Operations, referring to the practices used to manage, deploy, and maintain AI models in production.

mAP (mean Average Precision): A common accuracy metric in computer vision, especially for evaluating object detection performance.

Domain Shift: A mismatch between training data and deployment environments that degrades recognition performance.

Data Drift: The gradual change in input data distribution over time after deployment, often driven by environmental or operational changes.

|

| |

| |

※The text and images in this article may not be reproduced without authorization. For licensing inquiries, please email contact@aimag.tw — [iDS Magazine Statement]※ |

|

|

|

| |

|

| |

|

|

|

| |

|